NVIDIA announces their next generation card, the GeForce GTX 680

posted:

3/22/2012 4:00:00 AM

NVIDIA is releasing its next generation card today and, following the current numbering convention, the GeForce GTX 680 is the new king of the hill. It's a GPU they've been working on for a couple of years, and they've made big strides in performance per watt.

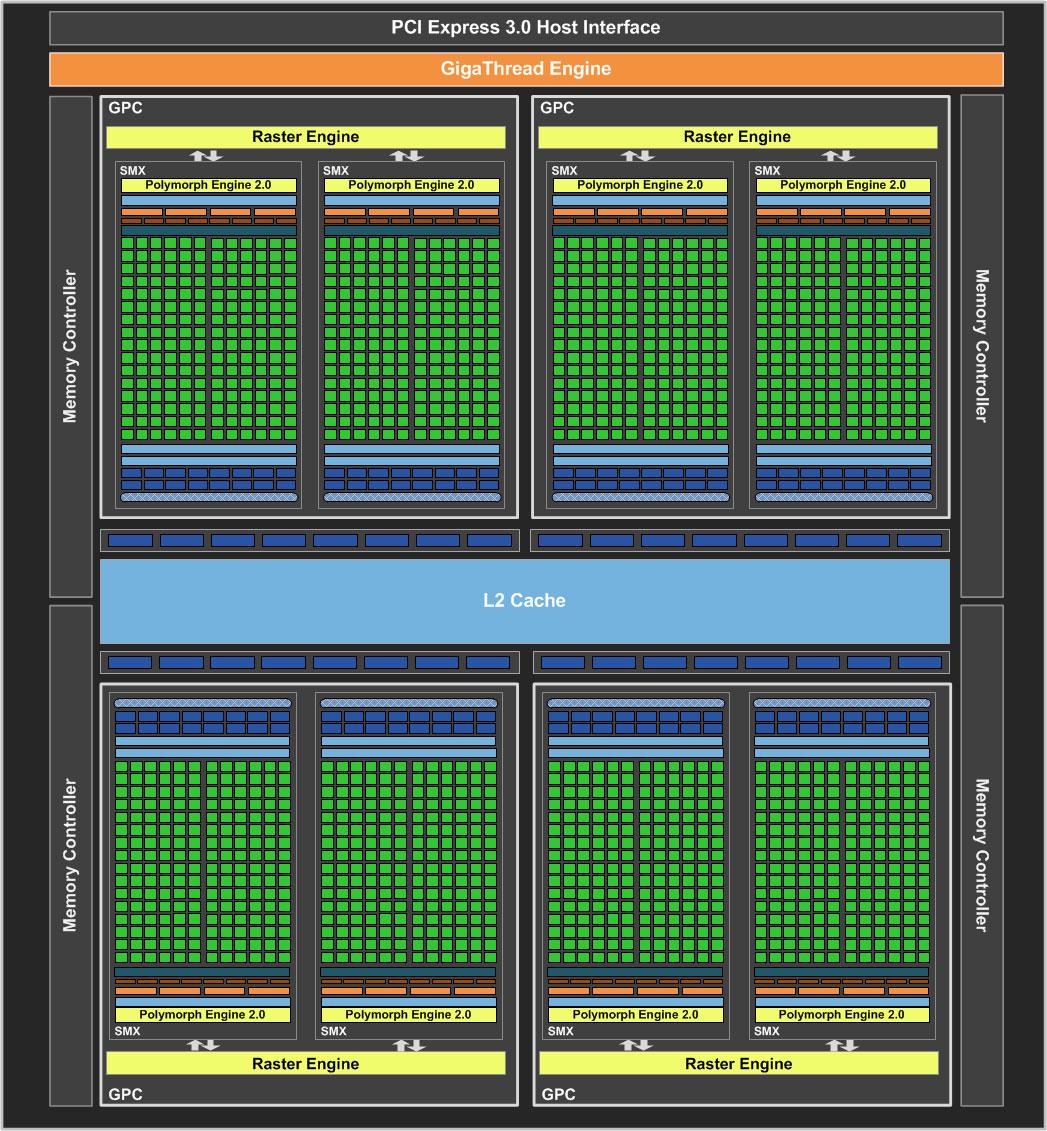

They're aiming for speed and efficiency with this card. The new architecture is called SMX. The GTX 580 was built from SM modules, each with 32 cores and a block of control logic. That control logic, located at the top of the module, figures out which core each instruction gets routed to.

NVIDIA says the SMX architecture offers 2X the performance per watt of the previous generation card. The space taken up by the control logic has been greatly reduced and the core count per unit has been upped to 192. The GTX 680 has 8 of these SMX units, giving you a total of 1536 cores.

The cooling design was improved with high efficiency embedded heat pipes, a custom fin stack shaped for better air flow, and acoustic dampening materials in the fan. The fin design also allows for a shorter board, which is very much appreciated as I was getting really tired of these long high end cards taking up so much room in my computer case. They spent a good amount of time on the board design to make it not only powerful, but also cool, quiet, and short.

Epic showed off the Samaritan demo using 3 GeForce GTX 580s back at GDC 2011. The demo shown at GDC 2012 used a single GeForce GTX 680. The 3 GTX 580 cards used 732W of power and generated 2500 BTUs of heat with a noise level of 51dBA. One GTX 680 used 195W of power, generating 660 BTUs of heat with a noise level of 46dBA, which is a vast improvement. The combination of the new architecture, some software optimizations, and working with Epic allowed for the same visual quality on a single card.
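The heat numbers above line up with the power numbers if you assume that essentially all of the electrical power ends up as heat. Here is a quick Python sketch of that conversion; the function and variable names are mine, and the results suggest the quoted BTU values are rounded and are really BTU per hour.

```python
# 1 watt of dissipated power is roughly 3.412 BTU per hour.
BTU_PER_HOUR_PER_WATT = 3.412142

def watts_to_btu_per_hour(watts: float) -> float:
    """Convert power draw to heat output, assuming all power becomes heat."""
    return watts * BTU_PER_HOUR_PER_WATT

three_580s_w = 732.0  # three GTX 580s, as quoted for the GDC 2011 demo
one_680_w = 195.0     # one GTX 680, as quoted for the GDC 2012 demo

print(round(watts_to_btu_per_hour(three_580s_w)))  # 2498
print(round(watts_to_btu_per_hour(one_680_w)))     # 665

# Fraction of power saved by going from three GTX 580s to one GTX 680.
power_saving = 1 - one_680_w / three_580s_w
print(f"{power_saving:.0%}")  # 73%
```

So the single card setup is drawing roughly a quarter of the power of the old three card rig for the same demo.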

The GTX 580 needed an 8 pin and a 6 pin power connector for its 250W TDP. The GeForce GTX 680 needs just two 6 pin connectors, so you have a much more powerful card that needs less power. NVIDIA recommends a 550W power supply, so you don't have to break the bank buying a new one to use with this card.

NVIDIA is saying that, on average, the GeForce GTX 680 is 20-40% faster than AMD's Radeon HD 7970 while consuming up to 40% less power. It remains to be seen if this is true, but if the card comes close to that claim, then it's a big win for NVIDIA.

To help improve performance, NVIDIA has added something new to this card called GPU Boost. At a high level, it automatically boosts the clock speed. There's no more fixed GPU clock; instead, the card raises the clock based on how much power the game is actually using. Previously, NVIDIA measured the power draw for various applications and set the fixed clock based on the worst case scenario. Most of the time the worst case isn't even a game, but a benchmark. What you ended up with was a clock set for a benchmark, while the games you actually play often use significantly less power. On previous cards, that extra power budget was wasted because the clock couldn't go any higher. Ramping up the clocks lets the card use the unused power budget to offer more performance. It just works, and no profile is needed since the card measures a bunch of parameters dynamically while the game is running. For those who want to tweak it more, you can still overclock on your own.
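To make the idea a little more concrete, here is a rough Python sketch of a power-based clock controller. This is not NVIDIA's actual algorithm, which the article doesn't describe in detail. The power target, clock ceiling, step size, and the `next_clock` function are all hypothetical values picked for illustration; only the 1006MHz base clock comes from the card's specs.

```python
BASE_CLOCK_MHZ = 1006    # base clock of the GTX 680
MAX_CLOCK_MHZ = 1110     # hypothetical ceiling, assumed for illustration
STEP_MHZ = 13            # hypothetical clock step, assumed for illustration
POWER_TARGET_W = 195.0   # hypothetical power budget, assumed for illustration

def next_clock(current_mhz: int, measured_power_w: float) -> int:
    """Pick the clock for the next interval from the measured board power."""
    if measured_power_w < POWER_TARGET_W:
        # Unused power budget: step the clock up, capped at the ceiling.
        return min(current_mhz + STEP_MHZ, MAX_CLOCK_MHZ)
    if measured_power_w > POWER_TARGET_W:
        # Over budget: step back down, but never below the base clock.
        return max(current_mhz - STEP_MHZ, BASE_CLOCK_MHZ)
    return current_mhz

# A made-up sequence of power readings from a game.
clock = BASE_CLOCK_MHZ
for power_w in [150.0, 160.0, 170.0, 200.0, 180.0]:
    clock = next_clock(clock, power_w)
    print(clock)
```

The point of the sketch is the contrast with a fixed clock: a light game that never reaches the power target keeps climbing toward the ceiling, while a heavy benchmark gets pulled back toward the base clock.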

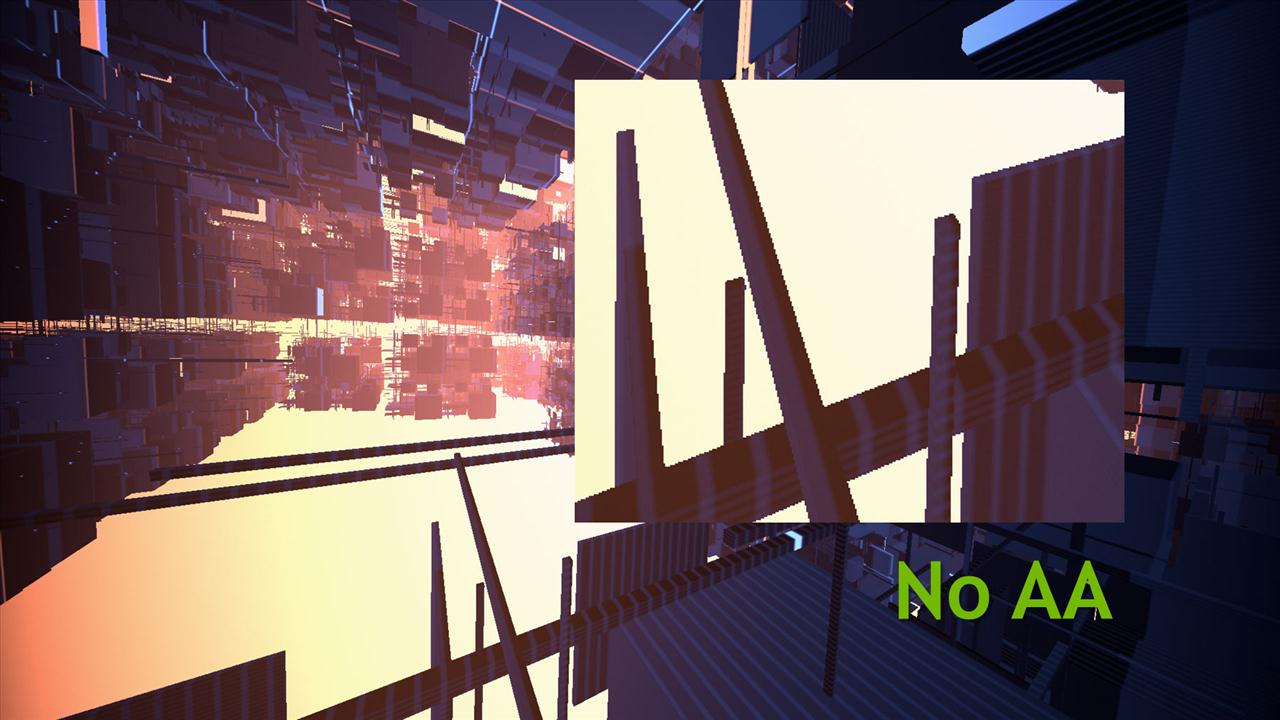

Let's talk a little bit about picture quality. Anti-aliasing is used to smooth out the jagged edges in an image. FXAA is an anti-aliasing technology from NVIDIA that debuted last year. MSAA is what has mostly been used and it does a great job, except where there are high contrast areas. Say you have a dark object in front of a bright background. You'll still see the jagged edges with MSAA. With FXAA, the smoothing is improved and the performance hit is significantly less than turning on 4x MSAA. NVIDIA is claiming that FXAA is 60% faster than 4x MSAA. Some games have implemented FXAA themselves, but with the release 300 drivers, FXAA is available in the control panel so you can use it in any game you want, rather than just those that have it programmed in.

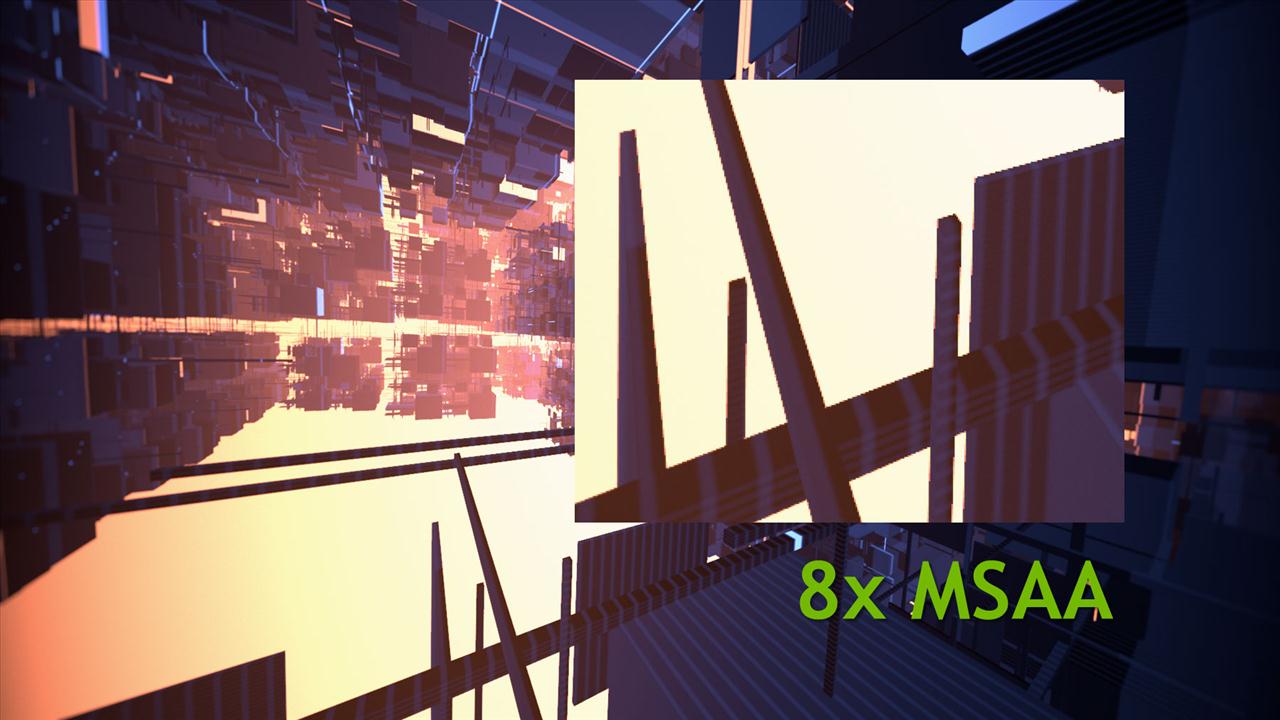

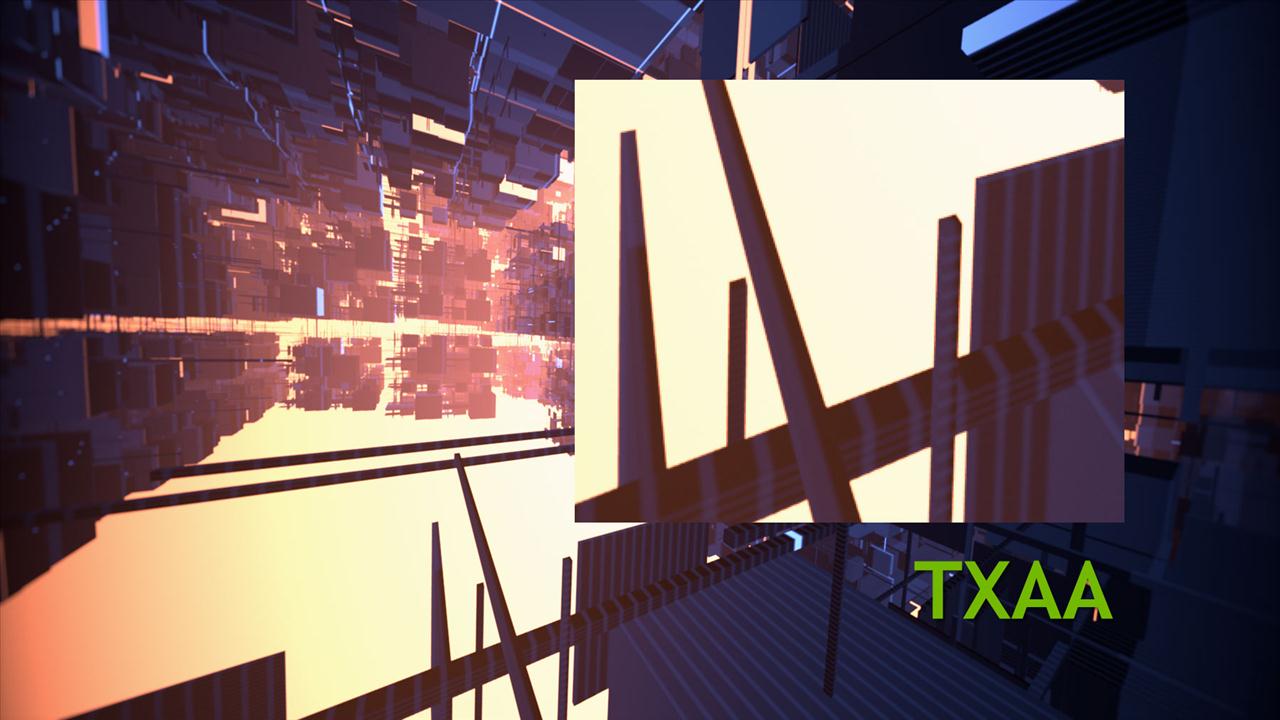

TXAA, or temporal AA, is a brand new anti-aliasing algorithm from NVIDIA. Unlike FXAA, it isn't something you just apply at the end of post processing, so it needs to be implemented in the game itself. With TXAA level 1, you get better quality than 8x MSAA at the performance cost of 2x MSAA. Going to level 2 will net you even better results than 8x MSAA at the cost of 4x MSAA. Shots of TXAA versus 8x MSAA show how much crisper the image is with the new algorithm. It's too bad that it needs to be implemented in the game in order to be used. Some games that will have this feature are Borderlands 2, The Secret World, Mechwarrior Online, and Eve Online, and companies like Epic will include it in their Unreal engine.

Using VSYNC helps eliminate tearing, but if your frame rate drops below your refresh rate, the displayed rate falls to the next step down, e.g. from 60 to 30. The jumping back and forth between 30 and 60 introduces stuttering that can get pretty annoying. Adaptive VSYNC from NVIDIA turns VSYNC off when the frame rate drops below 60 and turns it back on at 60 and above. Adaptive VSYNC should provide a better experience than regular VSYNC and, as NVIDIA puts it, there's no reason to use regular VSYNC when you have this option.
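Here is a small Python sketch of why that works. It uses a simplified model that I'm assuming for illustration (plain double-buffered VSYNC on a 60Hz display, where a late frame has to wait for the next refresh), and the function names are mine, not anything from NVIDIA's drivers.

```python
import math

REFRESH_RATE_HZ = 60  # assumed display refresh rate

def regular_vsync_fps(render_fps: float, refresh_hz: int = REFRESH_RATE_HZ) -> float:
    """Displayed rate with plain VSYNC: each frame waits for a whole refresh.

    A frame that misses one refresh is shown on the next one, so the
    displayed rate snaps to refresh/1, refresh/2, refresh/3, and so on.
    """
    refreshes_per_frame = math.ceil(refresh_hz / render_fps)
    return refresh_hz / refreshes_per_frame

def adaptive_vsync_fps(render_fps: float, refresh_hz: int = REFRESH_RATE_HZ) -> float:
    """Displayed rate with the adaptive rule described above."""
    if render_fps >= refresh_hz:
        # GPU keeps up with the display: VSYNC stays on, capped at the refresh rate.
        return float(refresh_hz)
    # GPU falls behind: VSYNC is switched off, frames are shown as they finish.
    return float(render_fps)

for fps in [75, 60, 50, 35]:
    print(fps, regular_vsync_fps(fps), adaptive_vsync_fps(fps))
```

In this model a game rendering at 50 frames per second gets shown at 30 with regular VSYNC but at 50 with the adaptive rule, at the cost of possible tearing while VSYNC is off.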

The GeForce GTX 680 can now drive 4 displays from a single card, so you can use it to run 3D Vision Surround with three monitors. Additionally, you can run 3 displays for surround and have a fourth monitor for stuff like chatting or email, which is actually kind of a cool feature. To drive four displays, the card features 2 dual link DVI connectors, one DisplayPort 1.2 connector, and one full size HDMI connector.

When driving multiple displays, a feature called Bezel Peeking allows you to see the parts of the image hidden behind the bezels. If you use bezel correction, you can lose menus behind the bezel. With a hot key, you can turn off bezel correction, letting you see the full image. Hit the hot key again and back to bezel corrected mode you go. It should help in things like RPGs, where some of your inventory items can end up hidden behind the bezel.

PhysX is one of the premium features you have access to when you purchase an NVIDIA video card. Borderlands 2 takes advantage of PhysX, as do many other hot upcoming titles. New PhysX modules will be available to developers this Fall to help cut the time needed to develop certain features. Fracture lets you break apart an object automatically instead of having to individually design the broken pieces. It also breaks the object randomly rather than the same way every time. That will save artists a lot of time since they won't have to design separate assets to show a broken apart object.

Fur is another module that will let you attach fur to characters. Each individual strand of hair is modeled, offering up realistic hair depiction and movement. These two should offer up some nice visual improvements once they are implemented in future games.

NVIDIA is also making sure they have Day 1 support for the best features on the best games available. From PhysX to 3D, NVIDIA is at the forefront in trying to make sure the AAA titles work with as many features as possible out of the box.

So there you have it. 1536 CUDA cores, a base clock of 1006MHz, a boost clock of 1058MHz, and 2GB of GDDR5 RAM on a 256-bit bus running at 6.0Gbps. The GeForce GTX 680 is launching today and is available for $499. If you've been waiting for the next great card from NVIDIA, here it is.
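A couple of derived figures fall out of those specs. The Python sketch below works out the peak memory bandwidth and the size of the boost over the base clock. The helper names are mine, and the bandwidth is the simple theoretical peak from bus width times data rate, not a measured number.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_gbps

def boost_uplift(base_mhz: float, boost_mhz: float) -> float:
    """Fractional clock increase going from the base clock to the boost clock."""
    return (boost_mhz - base_mhz) / base_mhz

print(memory_bandwidth_gb_s(256, 6.0))       # 192.0
print(f"{boost_uplift(1006, 1058):.1%}")     # 5.2%
```

So the 256-bit bus at 6.0Gbps works out to about 192GB/s of peak bandwidth, and the listed boost clock is a little over 5% above the base clock.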
